HDR in camera acquisition and 3D rendering has been around for a long time. Now it is time to see HDR on the screen as well.

This is a short introduction to the term HDR for media professionals who are used to working on set or on location, or who visit post-production houses as clients. They have most likely heard the term HDR, but they most likely still judge images on SDR screens.

High dynamic range imagery has been around for quite some time, but we usually compress this image information (tone mapping) so it can be displayed on standard dynamic range displays. At other times, we simply lose this information somewhere along the “way” in post-production. As a result, you never get to see it, even though more and more screens are now capable of displaying HDR images.

In 2020, I finally got the chance to explore the topic of HDR content production. If you are interested in how I started this “journey”, you can read about it here.

In this article, I’d like to explain how close we already are to using all the brightness that we capture or render. For the best experience, I recommend reading this article with Safari on an iPhone X or newer.

SDR & HDR in direct comparison

Before any further explanation, compare the two images in the slides below with those in the Vimeo clip. If your device supports HDR, you will notice a big difference in perceived brightness between the still images and the clip. While the clip is playing, you can switch the slides to match the images in the clip.

Too many terms: HD, UHD, SDR, HFR, etc.

What do the terms SDR and HDR stand for?

Maybe someone at work has asked you: “I thought we were working in HD, or maybe UHD? And WTF is HFR?”

Let me introduce you to some of these terms:

HD (often loosely called 2K) and UHD (4K) refer to the pixel resolution of a screen. HD has a resolution of 1920 x 1080 pixels, and UHD has four times as many pixels, 3840 x 2160. The game continues with 8K, which quadruples the pixel count again to 7680 x 4320 pixels. These terms are not really “news”.
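To make the quadrupling concrete, here is a small Python sketch that multiplies out the resolutions listed above and compares each one to HD. It only restates the numbers from this paragraph; the dictionary and function names are my own.

```python
# Pixel dimensions (width, height) of the formats mentioned above.
RESOLUTIONS = {
    "HD": (1920, 1080),
    "UHD": (3840, 2160),
    "8K": (7680, 4320),
}


def pixel_count(name: str) -> int:
    """Total number of pixels for a named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


if __name__ == "__main__":
    hd = pixel_count("HD")
    for name in RESOLUTIONS:
        count = pixel_count(name)
        print(f"{name}: {count:,} pixels ({count // hd}x HD)")
```

Each step doubles both width and height, which is why the pixel count grows by a factor of four each time (1x, 4x, 16x HD).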

Depending on the size of the screen and the viewer’s distance to it, UHD and higher resolutions might not be distinguishable to the eye unless you are close enough to the screen.
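One rough way to estimate “close enough” is to ask at what distance a single pixel becomes smaller than what the eye can resolve. The sketch below assumes a visual acuity of about one arcminute (a common rule of thumb for 20/20 vision) and a 16:9 screen; both are simplifications, and the function names are hypothetical.

```python
import math

ARCMINUTE_RAD = math.radians(1 / 60)  # assumed acuity limit (~20/20 vision)
INCH_M = 0.0254


def screen_width_m(diagonal_inches: float, aspect=(16, 9)) -> float:
    """Physical width of a screen from its diagonal and aspect ratio."""
    w, h = aspect
    return diagonal_inches * INCH_M * w / math.hypot(w, h)


def max_resolving_distance_m(diagonal_inches: float, horizontal_pixels: int) -> float:
    """Distance beyond which one pixel subtends less than one arcminute."""
    pixel_pitch = screen_width_m(diagonal_inches) / horizontal_pixels
    return pixel_pitch / math.tan(ARCMINUTE_RAD)


if __name__ == "__main__":
    for name, px in (("HD", 1920), ("UHD", 3840)):
        d = max_resolving_distance_m(65, px)
        print(f'65" {name}: single pixels blend together beyond ~{d:.2f} m')
```

For a 65-inch screen this gives roughly 2.6 m for HD and 1.3 m for UHD. Under these assumptions, if you sit farther back than about 2.6 m, the extra detail of UHD is essentially invisible.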

So basically, if you can’t see any difference, why should you want it or make someone pay for it?

Two additional technologies are used to set UHD apart from HD and make it look more interesting, newer, or just different. Honestly, both work technically just fine at HD resolution, but they make the most sense when combined in a UHD or higher format.

Another term is high frame rate (HFR), currently up to 120 frames per second. Ang Lee used HFR in his latest movies. In my opinion, 120 FPS brings a whole new experience to the viewer. At 120 FPS, fast movements of objects or of the camera are shown in so many sharp motion steps that the eye and brain create the “motion blur” themselves. This is much more realistic than a regular 24/25 FPS presentation, where the blur is baked into every frame: just pause a recording of a fast-moving object and you will see it blurred in the direction of the movement.
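The difference can be put into numbers with a simple model. The sketch below assumes a classic 180° shutter (each frame is exposed for half the frame interval) and an object crossing a 3840-pixel-wide frame in two seconds; both values are illustrative assumptions, not taken from any specific production.

```python
def exposure_time_s(fps: float, shutter_angle_deg: float = 180.0) -> float:
    """Exposure time of one frame for a given shutter angle (180° = half the frame interval)."""
    return shutter_angle_deg / (360.0 * fps)


def blur_length_px(speed_px_per_s: float, fps: float, shutter_angle_deg: float = 180.0) -> float:
    """Length of the blur streak a moving object leaves inside a single frame."""
    return speed_px_per_s * exposure_time_s(fps, shutter_angle_deg)


def step_px(speed_px_per_s: float, fps: float) -> float:
    """Distance the object jumps between two consecutive frames."""
    return speed_px_per_s / fps


if __name__ == "__main__":
    speed = 3840 / 2.0  # assumed: crosses a UHD-wide frame in 2 seconds
    for fps in (24, 120):
        print(f"{fps:>3} FPS: blur {blur_length_px(speed, fps):.0f} px, "
              f"step {step_px(speed, fps):.0f} px")
```

Under these assumptions, the streak inside each frame shrinks from about 40 px at 24 FPS to about 8 px at 120 FPS, and the jump between frames from 80 px to 16 px, which is why each moving part can be followed as a nearly sharp image.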

A film presented at 120 FPS or more lets you focus on every moving part of an image and see it without “motion blur” for a very brief moment (like looking out of a moving train). I had the opportunity to see a 15-minute special presentation at the IBC convention in Amsterdam: the audience could experience an excerpt from an Ang Lee film in 120 FPS, stereoscopic 4K, with two laser projectors, one for each eye. It blew my mind.

One more term: HDR

The technology I want to focus on is called HDR, which stands for high dynamic range. With its introduction, another term was needed to describe a non-HDR image: SDR, which stands for standard dynamic range. An SDR image is what you will normally see on any professional monitor on set, on location, or in a post-production house. Some of these screens are capable of displaying HDR content, but as long as no one asks for HDR, it is fine to work with SDR content, unless you work for OTT services like Netflix or Amazon Prime.

I think this will change sooner rather than later. High-end Samsung phones, iPhones (since the iPhone X), and other high-end smartphones from around the same time most likely have an HDR-capable screen.

Example of an SDR image from an HDR source

Please note that the images on this page are NOT from an ARRI Alexa. I took them with my Canon 7D MKII on a holiday trip in 2016. Because of the high contrast, I took three exposures that I later merged into one high dynamic range file. The dynamic range of this merged HDR file is similar to what an ARRI Alexa can capture in a single exposure. All the images were prepared in Nuke (ACES).
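For readers curious how three bracketed exposures become one HDR file, here is a generic sketch of the idea: each frame is scaled back by its exposure difference, and the estimates are averaged, trusting mid-tones more than near-black or clipped values. It assumes linear (raw-like) pixel values and exposure offsets in stops. It is not necessarily the tool or algorithm used for the images on this page, and the function names are hypothetical.

```python
from typing import List, Sequence


def hat_weight(v: float) -> float:
    """Trust mid-tones most; near-black (noisy) and near-white (clipped) values get ~0 weight."""
    return max(0.0, 1.0 - abs(2.0 * v - 1.0))


def merge_exposures(frames: Sequence[Sequence[float]], ev_offsets: Sequence[float]) -> List[float]:
    """Merge bracketed frames into one relative-radiance image.

    frames:     one list of linear pixel values in [0, 1] per exposure
    ev_offsets: exposure of each frame in stops relative to the reference (e.g. -2, 0, +2)
    """
    shortest = min(range(len(ev_offsets)), key=lambda k: ev_offsets[k])
    merged = []
    for values in zip(*frames):  # the same pixel across all exposures
        num = den = 0.0
        for v, ev in zip(values, ev_offsets):
            w = hat_weight(v)
            num += w * v / (2.0 ** ev)  # scale back to the reference exposure
            den += w
        if den > 0.0:
            merged.append(num / den)
        else:
            # No trustworthy sample (clipped or black everywhere): use the darkest frame.
            merged.append(values[shortest] / (2.0 ** ev_offsets[shortest]))
    return merged


if __name__ == "__main__":
    # Two pixels seen in a -2 / 0 / +2 EV bracket (hypothetical values).
    frames = [[0.05, 0.75], [0.2, 1.0], [0.8, 1.0]]
    print(merge_exposures(frames, [-2, 0, 2]))
```

For example, a pixel that reads 0.75 in the -2 EV frame but is clipped in the other two is reconstructed as 3.0, brighter than reference white, which is exactly the kind of information an SDR image cannot hold.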

Let’s imagine we are on location in Barcelona with an ARRI Alexa, looking at the signal on a regular SDR (client) video monitor. The image might look like this:

This image was generated from a high dynamic range source, tone-mapped, and displayed on a standard dynamic range (SDR) monitor. The tone-mapping curve is ARRI’s standard 3D LUT.
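ARRI’s LUT itself is not reproduced here. As a stand-in, the sketch below uses the well-known extended Reinhard operator to show what a tone-mapping curve does in general: scene-linear values far above 1.0 are squeezed into the display range, and everything above a chosen `white` point clips. The 2.4 gamma step, the `white=4.0` default, and the function names are assumptions for illustration.

```python
def reinhard_extended(x: float, white: float = 4.0) -> float:
    """Extended Reinhard curve: scene-linear x >= 0 -> display-linear, reaching 1.0 at x == white."""
    x = max(x, 0.0)
    return x * (1.0 + x / (white * white)) / (1.0 + x)


def to_sdr_code_value(x: float, white: float = 4.0, bits: int = 8) -> int:
    """Tone-map a scene-linear value and encode it as an integer SDR code value."""
    display = min(reinhard_extended(x, white), 1.0)  # anything brighter than `white` clips
    encoded = display ** (1.0 / 2.4)                 # assumed inverse of a 2.4 display gamma
    return round(encoded * (2 ** bits - 1))


if __name__ == "__main__":
    # Hypothetical scene-linear values: 0.18 = mid grey, 1.0 = diffuse white.
    for value in (0.0, 0.18, 1.0, 4.0, 50.0):
        print(f"scene {value:>5}: SDR code value {to_sdr_code_value(value)}")
```

Whatever the exact curve, the pattern is the same: a wide range of scene brightness has to share the few code values available on an SDR display.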

The brightness (meaning the amount of light emitted by the display) of regular SDR screens is limited. I can color grade an image to make it appear brighter, but only by making other elements darker. There is nothing “whiter” than “white” on a regular SDR screen. In the end, “white” doesn’t exist per se; “white” is simply the maximum achromatic brightness that a given screen can display.

Another type of “HDR” image, which should really be called “extreme tone mapping”

Sadly, this is (or was) also called an “HDR” image, although this kind of SDR image is simply generated from a high dynamic range source and then heavily tone-mapped to compress all the HDR data into an SDR image for an SDR display. The term “HDR image” is misleading in this case, because it only describes the attempt to display every recorded HDR pixel at once. The resulting look is often unreal, although, at least for me, this image shows how my eye can explore every part of the frame and how every part is “well” exposed.

Gamut and Brightness (Nits)

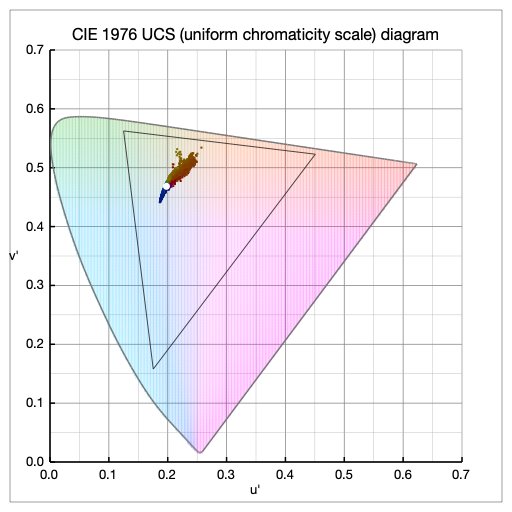

The color palette of SDR displays, such as HD monitors, is usually limited to the Rec.709/sRGB gamut. The triangle in the next diagram shows the most saturated colors possible within Rec.709/sRGB. The plotted pixels show that the Barcelona scene, which was shot against the sun, does not contain strongly saturated colors. The small standard HD gamut is more than sufficient for this image, although HDR content can use a much wider color gamut (from DCI-P3 up to Rec.2020).
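Checking whether a color fits into a gamut boils down to a point-in-triangle test on the CIE xy chromaticity diagram. The sketch below uses the published Rec.709 and Rec.2020 primaries and the standard linear Rec.709/sRGB (D65) to XYZ matrix. The function names are my own, and real plotting tools handle many more details (white point adaptation, out-of-range values).

```python
Point = tuple[float, float]

# CIE xy chromaticities of the red, green and blue primaries.
REC709: tuple[Point, Point, Point] = ((0.64, 0.33), (0.30, 0.60), (0.15, 0.06))
REC2020: tuple[Point, Point, Point] = ((0.708, 0.292), (0.170, 0.797), (0.131, 0.046))


def linear_rec709_to_xy(r: float, g: float, b: float) -> Point:
    """Linear Rec.709/sRGB (D65) RGB -> CIE xy chromaticity (undefined for pure black)."""
    x_ = 0.4124 * r + 0.3576 * g + 0.1805 * b
    y_ = 0.2126 * r + 0.7152 * g + 0.0722 * b
    z_ = 0.0193 * r + 0.1192 * g + 0.9505 * b
    total = x_ + y_ + z_
    if total == 0.0:
        raise ValueError("black has no chromaticity")
    return (x_ / total, y_ / total)


def _cross(o: Point, a: Point, b: Point) -> float:
    """z-component of (a - o) x (b - o); its sign says which side of o->a the point b lies on."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def in_gamut(xy: Point, primaries) -> bool:
    """True if the chromaticity lies inside (or on) the triangle spanned by the primaries."""
    r, g, b = primaries
    d1, d2, d3 = _cross(r, g, xy), _cross(g, b, xy), _cross(b, r, xy)
    has_neg = d1 < 0 or d2 < 0 or d3 < 0
    has_pos = d1 > 0 or d2 > 0 or d3 > 0
    return not (has_neg and has_pos)


if __name__ == "__main__":
    white = linear_rec709_to_xy(1.0, 1.0, 1.0)  # D65 white point
    deep_green = (0.17, 0.70)                     # hypothetical saturated green
    print(white, in_gamut(white, REC709))
    print(in_gamut(deep_green, REC709), in_gamut(deep_green, REC2020))
```

A deep green like the one above falls outside the Rec.709 triangle but inside Rec.2020, which is the kind of color a wider HDR gamut can show and a standard HD monitor cannot.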

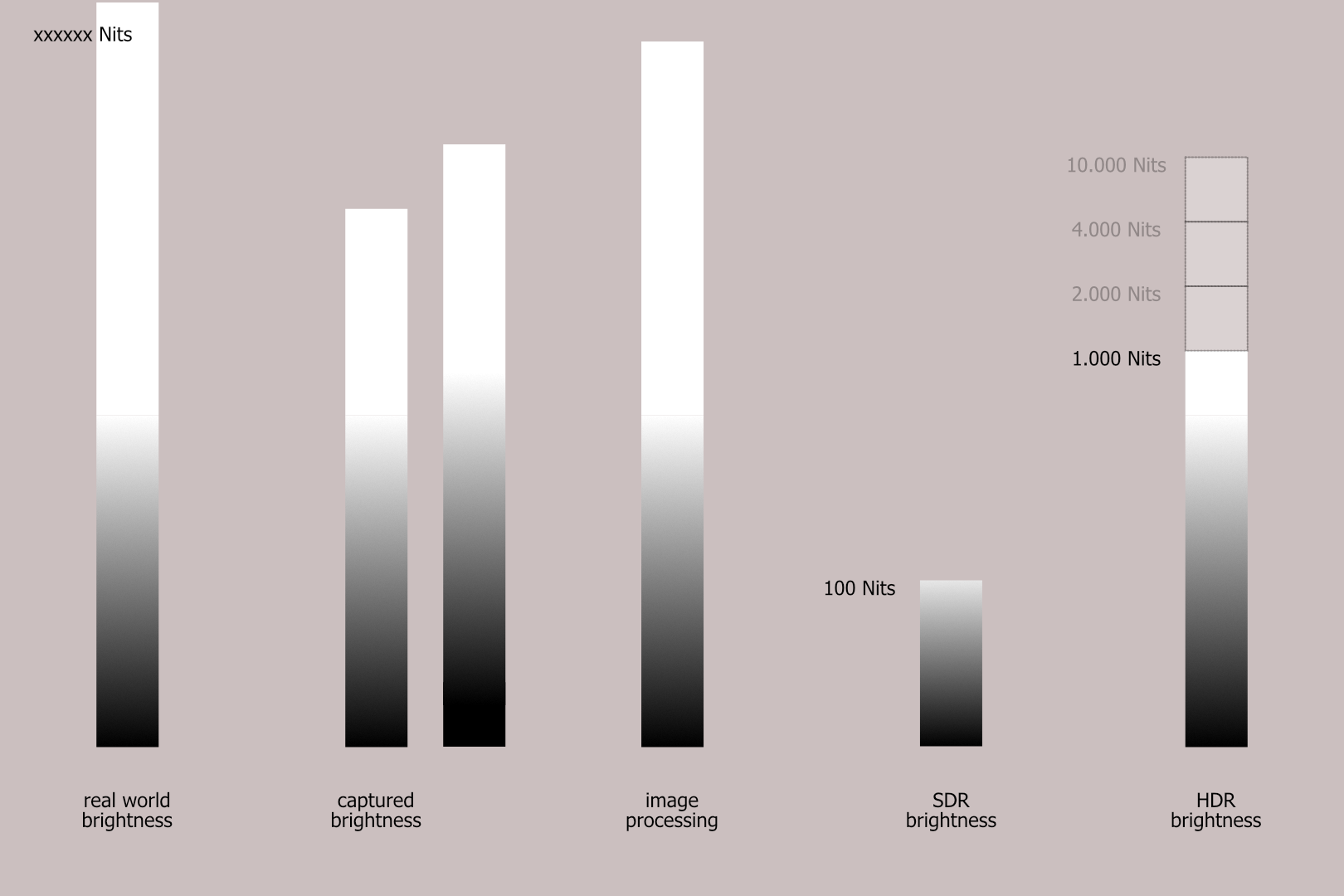

Furthermore, an SDR monitor can’t display more brightness than its standard defines. HD video is mastered for a reference peak brightness of 100 nits.

Real world brightness and captured brightness

Imagine standing on location in Barcelona under the bright evening sun: when shooting directly into the sunlight, it is hard to produce an image on a regular SDR screen that shows how bright it really was.

Real-world brightness exceeds what any professional camera can capture. By adjusting the exposure, you choose the most important part of the “scene”: the part that should be usefully exposed on the sensor and end up visible to the eye. I tried to illustrate this with the two columns for the captured brightness.

The more you want to see into the “light”, the more information you lose in the dark areas, the shadows. Even so, the brightness range captured by an ARRI, RED, SONY, etc. camera is still far greater than what an SDR monitor can display.

Image processing

Most grading and compositing tools can process captured or generated image data with such high dynamic range and precision that you don’t need to worry about losing any quality at this stage of post-production.

SDR to HDR

Looking at the SDR column, I hope it’s clear that working on top of graded SDR images (for retouching, compositing, or additional grading) means that a lot of the captured HDR data is already lost, and it can’t be brought back in any meaningful way for an HDR output.
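A tiny experiment shows why the lost data can’t come back. The sketch assumes a simple hard clip at display white and, separately, a soft curve `x / (1 + x)` quantized to 8 bits; the highlight values and function names are hypothetical. In both cases, different scene brightnesses end up as the same SDR value, so no later step can tell them apart again.

```python
def hard_clip(scene_value: float) -> float:
    """SDR 'bake' by clipping: anything above display white (1.0) becomes 1.0."""
    return min(max(scene_value, 0.0), 1.0)


def soft_curve_8bit(scene_value: float) -> int:
    """SDR 'bake' with a soft roll-off, then quantized to an 8-bit code value."""
    x = max(scene_value, 0.0)
    return round(x / (1.0 + x) * 255)


# Hypothetical relative scene values: white wall, bright sky, sunlit chrome, the sun itself.
highlights = [1.0, 2.5, 8.0, 40.0]
clipped = [hard_clip(v) for v in highlights]  # all four become 1.0

if __name__ == "__main__":
    print(clipped)
    # Even without clipping, nearby highlights share one 8-bit code value.
    print(soft_curve_8bit(30.0), soft_curve_8bit(31.0))
```

The mapping is many-to-one, so there is no inverse: an HDR output made from these SDR values can only guess at the original highlights.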

Therefore, the final grade should always be the last step in post-production, not the first. Grading first is still common today, a habit carried over from working with film, when a transfer from film to video was needed before anyone could work on the images at all.

HDR displays with a maximum brightness of 1,000 nits allow parts of the image to be 10x more emissive than on a regular SDR display. Dolby’s standard allows even brighter displays in the future: another 10x, up to 10,000 nits, so 100 times more emissive than SDR.
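The “10x” and “100x” figures are easy to double-check, and camera people may find them more intuitive in stops (each stop doubles the light). The sketch assumes the 100-nit SDR reference peak and 10,000 nits as the ceiling of the PQ encoding used by Dolby’s HDR formats; the function names are my own.

```python
import math

SDR_PEAK_NITS = 100.0  # reference peak brightness for SDR/HD video


def brightness_ratio(peak_nits: float) -> float:
    """How many times brighter a display's peak is than the SDR reference."""
    return peak_nits / SDR_PEAK_NITS


def headroom_stops(peak_nits: float) -> float:
    """The same ratio expressed in photographic stops (each stop doubles the light)."""
    return math.log2(brightness_ratio(peak_nits))


if __name__ == "__main__":
    for label, peak in (("1,000-nit HDR display", 1_000.0), ("10,000-nit PQ ceiling", 10_000.0)):
        print(f"{label}: {brightness_ratio(peak):.0f}x SDR, "
              f"about {headroom_stops(peak):.1f} stops more")
```

That is roughly 3.3 extra stops of highlight headroom for a 1,000-nit display and about 6.6 stops at 10,000 nits.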

HDR images displayed on an HDR display

As I can’t show an HDR image as a still frame on this page (yet), consider the following two SDR images as a single HDR image displayed at once: you “see” the image on the left and, at the same time, the brightest parts of the image on the right on top of it. The average brightness of the image is not necessarily higher than in the left image, but the highlights can be up to 10x brighter than on a regular SDR screen.

Displaying HDR content requires a different image encoding and transfer curve. One of these standards is Dolby PQ (perceptual quantizer, standardized as SMPTE ST 2084), and I used it to create the Vimeo HDR content presented below.
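Unlike SDR gamma, PQ encodes absolute luminance from 0 to 10,000 nits. Here is a minimal Python sketch of the encoding (inverse EOTF) and decoding (EOTF) using the published SMPTE ST 2084 constants. It ignores full/narrow range and bit depth, which a real pipeline has to handle, and the function names are my own.

```python
# SMPTE ST 2084 (PQ) constants
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32
PQ_PEAK_NITS = 10_000.0


def pq_encode(nits: float) -> float:
    """Absolute luminance in cd/m² (nits) -> PQ signal value in [0, 1] (inverse EOTF)."""
    y = min(max(nits / PQ_PEAK_NITS, 0.0), 1.0)
    ym = y ** M1
    return ((C1 + C2 * ym) / (1.0 + C3 * ym)) ** M2


def pq_decode(signal: float) -> float:
    """PQ signal value in [0, 1] -> absolute luminance in nits (EOTF)."""
    e = min(max(signal, 0.0), 1.0) ** (1.0 / M2)
    y = max(e - C1, 0.0) / (C2 - C3 * e)
    return PQ_PEAK_NITS * y ** (1.0 / M1)


if __name__ == "__main__":
    for nits in (1.0, 100.0, 1_000.0, 10_000.0):
        print(f"{nits:>8.0f} nits -> PQ signal {pq_encode(nits):.3f}")
```

With these formulas, 100 nits (the whole SDR range) lands at a signal value of about 0.51 and 1,000 nits at about 0.75, so roughly half of the PQ code values are reserved for brightness above SDR white.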

Unless you view this page with Safari on an iPhone X or newer, you won’t be able to see the HDR content that I linked here from Vimeo. Even with an HDR display, every hardware and software component in the chain needs to support HDR content. Otherwise, only an SDR version will be shown.

On an iPad Pro, for example, the embedded Vimeo clip only shows the SDR version. To see the HDR version, you need to tap the “vimeo” button at the bottom right, which takes you to the Vimeo app. I am not sure why Apple does not support HDR content directly in Safari on the iPad, even though the HDR setting under Preferences/Safari/Experimental Features is “on” by default. On an iPhone, however, it does work.

You can find more HDR example clips here on the website or directly on my Vimeo & YouTube channels.

On HDR-capable Android devices, you can watch HDR content in the YouTube and Vimeo apps. HDR is most likely not supported when the content is embedded in a website.

Last thoughts for now

Nowadays, captured or generated material is already HDR in most cases, but the available high dynamic range data is usually not processed for display on HDR screens.

The grading step usually reduces, compresses and tone-maps the high dynamic range material for an SDR presentation.

If you want to generate an HDR output, you need to switch the grading system to HDR and use a grading monitor that can properly display HDR content.

There are automatic and manual ways to derive an SDR version from an HDR master. If the automatic approach does not give a good enough result, a manual trim pass with an SDR monitor attached to the grading system lets you create the best SDR version from the HDR master.

Recommendations & Links

I can recommend these two links if you want to look further and deeper into the subject of HDR content production. There you can also find descriptions of all the different flavors of HDR formats:

- https://www.finalcolor.com/colorblog/hdr-an-introduction

- https://www.lelabodejay.com/ (YouTube Videos in French & English)